The State Explosion Problem

A non-hierarchical, "flat", design results in too much detail to comprehend all at once.

When you write out all of the possible states of a system, along with all possible transitions, the result looks very complicated and hard to understand.

A trivial example follows. The example is purposefully simple, but, gives a feel for how the complexity of a system increases due to the combinatorial effects of interactions between states.

The existence of the state explosion problem doesn’t mean that reasoning about a system in terms of its state must be avoided, just that some kind of structuring principle is needed.

In fact, this kind of reasoning happens all of the time in software development.

Example

Lamp

Lamp with 2 momentary push buttons

On/Off

Brightness Low/Medium/High

State Explosion

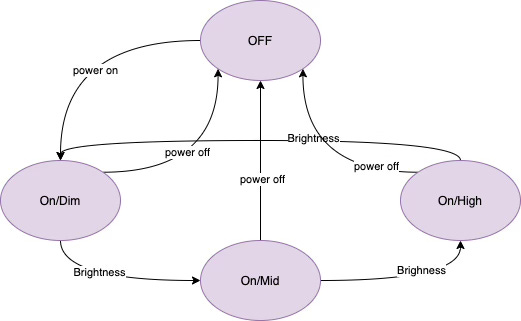

If we simply draw a diagram that shows all possible states and transitions between them, the diagram is hard to read due to the “state explosion” problem. In the ON state, there are 3 possible sub-states - Dim, Mid, High. Each of those states has possible transitions on “power off” to the OFF state.

This is a simple example. The state explosion problem becomes increasingly tangled in more complicated software architectures.

Layering - HSM - Hierarchical State Machine

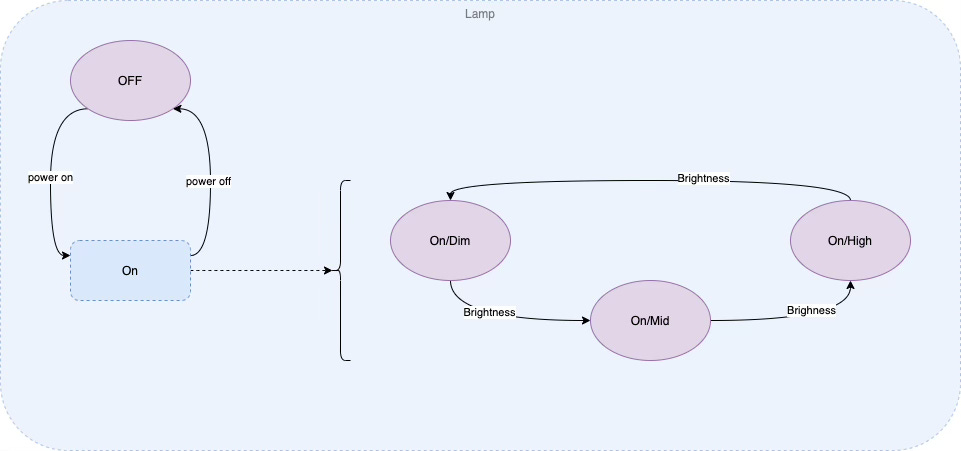

We can redraw the diagram to show the 3 sub-states as a sub-statemachine of the ON state. This diagram is easier to read.

We can treat the sub-state as an orthogonal state machine. In software terms, the OFF/ON machine is a thread and the Dim/Mid/High machine is another independent thread.

The OFF/ON machine overrides the actions of the child Dim/Mid/High machine. For example, if the OFF/ON machine transitions from ON to OFF, then the sub-machine exits regardless of what state it is in. If the sub-machine were to contain other sub-machines, then all of the sub-machines would be controlled by the parent machine (OFF/ON in this simple example). When the parent machine changes state, all submachines exit their state - in a deepest-first manner.

This behaviour is the inverse of how inheritance in OO works. The parent machine controls the children machines in HSMs (Hierarchical State Machines). In OO, though, children can control the actions of the parent machine via method overriding. OO inheritance is reasonable for data structuring, e.g. graphics data, but, OO inheritance is not reasonable for control-flow structuring, as it may result in control-flow “surprises” that make debugging and reasoning about control flow difficult.

This kind of structuring was originally suggested by Harel in the 1987 StateCharts paper. I call this kind of inverse-inheritance Parental Authority. Harel did not give it an explicit name.

From a low-level software perspective, this is concurrency with message passing. The messages implied by Harel’s notation are messages about state changes.

"Concurrency" is called "orthogonal states" in StateChart notation.

Statechart notation implies that there is a high-priority message that can cause inner states to abort. In modernized versions of these ideas, such as 0D, it turns out that priority messages are not generally required, and such behaviour can be explicitly designed in by Software Architects.

Reasoning About Programs

It is popular to imagine that the phrase “reasoning about programs” implies the sole use of mathematical, synchronous approaches.

Academics and programming researchers favour the synchronous approach. Approaches for applying these techniques have grown slowly over some 5 decades.

Practitioners, though, favour a different approach to reasoning about programs, for example using debuggers and using various concise programming language syntaxes.

Synchronous Function Based Reasoning

One approach to organizing complicated software is to use synchrony and sequential operation, i.e. to use synchronous programming languages. This technique is currently in vogue in programming languages such as Rust, Haskell, Javascript, Python, etc. The drawback of this technique is that a system’s complexity must still be dealt with in some manner and often leaks out in the form of global variables and intricate sequences of functions that pass parameters to one another while restricting the expression of possible states of the system through over-use of synchronization.

Functional programs are chopped up into smaller state machines under-the-hood through the use of preemptive operating systems. This approach takes the decision of how to structure state machines out of the hands of programmers and requires software and hardware scaffolding to support the paradigm, e.g. MMUs, context-switching, increased memory requirements, etc.

Preemption saves the state of a functional program on a dynamic stack. Functional programs do contain state, but that state is hidden from programmers.

The scaffolding needed to support the functional paradigm inserts inefficiency into programs built this way. This inefficiency tends to remain hidden and is overlooked by most programmers.

The Functional paradigm does not prescribe a way to compose systems in a scalable manner. Any function can be call any other function. This problem is exacerbated by the use of a technique called CPS - Continuation Passing Style - which is essentially a modernized form of unrestricted GOTO.

State Machine Based Reasoning

Another approach to dealing with the state explosion problem is to allow programmers to decompose systems into sets of isolated state machines, each with a simple, stand-alone structure while sending messages about internal state changes between state machines. Such decomposition often involves placing isolated state machines in separate, completely isolated threads. Each thread encapsulates control flow as well as data. Isolated state machines can send data to each other, but cannot transfer control-flow (say, by using function calling).

This is usually called message passing. The phrase “message passing” has, though, historically, been muddied and overloaded to include synchronous method calling in object-oriented languages. Words and phrases such as reactive and main loop have been used in an attempt to differentiate techniques, but, also, end up being conflated with synchronous approaches.

Isolated state machines do not transfer control flow between one another, as is done with traditional synchronous, function-based programming languages. In function-based programming languages, a function passes control flow to another function by calling it. The caller suspends its own execution while the callee executes. The caller must synchronize with the callee and must wait for a result value, or, wait for some kind of signal that the callee has terminated execution.

It is not difficult to use threads to help organize the states of a system, but traditional synchronous programming languages make it appear to be difficult to use threads.

At the basic level, the use of state machines does not necessarily imply a way to organize and structure scalable systems. A method for organizing systems in a hierarchy of orthogonal states was documented in Harel’s 1987 paper about Statecharts. Computer hardware of the day could not easily handle the suggested syntax (mostly graphical) and the technique was generally overlooked. Instead, the programming community continued to use and still continues to use the synchronous approach to solving problems and uses outdated programming languages ideas with syntax based solely on Gutenberg type-setting principles.

Actors

Other approaches, such as Actors, have been suggested, but, tend to be implemented in a synchronous manner, crippling the advantages of the approach.

The Actor paradigm does not prescribe a way to compose systems in a scalable manner.

Bloatware

The synchronous programming language approach has caused its own form of state explosion, more commonly known as bloatware.

Modern operating systems are unmanageably large, e.g. Windows® clocks in at about 50,000,000 lines of code. One line of code often unrolls into a sequence consisting of many CPU-level opcodes.

Memory sizes in computers have increased dramatically. It is generally accepted that computers must have main memory sizes measuring in the Gb range and cache sizes in the Mb range.

I prompted Clause 3.5 about memory sizes. Claude 3.5 Sonnet is an LLM - a large language model form of AI. From its responses regarding memory size, one can conclude that memory size has grown significantly due to richer forms of media data, but, code size has, also, grown significantly in a pattern that resembles a super-linear curve. One can conclude that the growth in code size is not directly proportional to the growth in data size nor application capabilities, i.e. code bloat is not directly tied to useful increases in data size.

Claude 3.5 Reports:

Program sizes vary dramatically depending on the type of software:

**Small Utility Applications:**

- Command-line tools: 100KB - 10MB

- Simple mobile apps: 5MB - 50MB

- Basic desktop utilities: 10MB - 100MB

**Medium-sized Applications:**

- Office productivity software: 200MB - 2GB

- Standard mobile apps with features: 50MB - 300MB

- Web browsers: 200MB - 500MB

- Development environments (IDEs): 500MB - 2GB

**Large Applications:**

- Modern video games: 10GB - 200GB+

- Professional creative suites (video/audio/graphics): 2GB - 20GB

- Enterprise software: 1GB - 10GB

**Operating Systems:**

- Mobile OS: 4GB - 10GB

- Desktop OS: 15GB - 50GB

The actual memory footprint during runtime is typically much smaller than the installed size, with most programs only loading necessary components into RAM. A modern application might use anywhere from 50MB to several GB of RAM while running, depending on its complexity and current operations.

For executables themselves (the actual .exe or binary files), sizes typically range from:

- Small utilities: 1MB - 20MB

- Medium applications: 20MB - 200MB

- Large applications: 200MB - 2GB+

However, the bulk of installed size often comes from assets like graphics, audio, databases, and libraries rather than the executable code itself.

Code size versus data size varies significantly across different types of applications, but there are some general patterns:

**Code Size Proportions:**

- For basic utilities and business applications: Code typically accounts for 5-20% of the total program size

- For development tools and frameworks: Code might represent 20-40% of total size

- For embedded systems: Code could be 50-90% of the total size

**Data Size Proportions:**

- Games: Often 90-99% data (textures, models, audio, video)

- Media applications: 80-95% data (sample content, assets, libraries)

- Business software: 60-90% data (templates, UI assets, help documentation)

Some specific examples:

- A typical modern video game might have 50-100MB of actual executable code but 50-200GB of assets and data

- A professional photo editing application might have 200-500MB of code but several GB of filters, presets, and sample content

- A modern web browser might have 100-300MB of core executable code with additional data for extensions, caches, and UI elements

For mobile apps, the ratio has been shifting even more toward data as apps include more rich media assets while the core functionality remains relatively compact.

In memory usage during runtime, the proportion can shift dramatically depending on what the program is doing. For example, a database application might load a small amount of code but process gigabytes of user data.

Modern mobile devices have varying memory requirements based on their intended use and operating systems:

**Smartphones (2025)**:

- Entry-level: 4GB-6GB RAM

- Mid-range: 8GB-12GB RAM

- Flagship: 12GB-16GB RAM

- Premium/Gaming: 16GB-24GB RAM

**Tablets**:

- Basic: 4GB-6GB RAM

- Mid-range: 8GB RAM

- Premium: 12GB-16GB RAM

- Pro models: 16GB-24GB RAM

**Smartwatches**:

- Basic: 512MB-1GB RAM

- Full-featured: 1GB-3GB RAM

- Premium: 2GB-4GB RAM

**Portable Gaming Devices**:

- Budget handhelds: 4GB-8GB RAM

- Premium handhelds: 16GB-32GB RAM

**E-readers**:

- Standard: 512MB-1GB RAM

- Premium: 1GB-2GB RAM

**Factors driving memory requirements**:

- Multitasking capabilities

- Camera resolution and processing

- AI/ML features for on-device processing

- Gaming performance

- OS overhead (Android typically requires more RAM than iOS)

- App complexity and background processes

- Display resolution

Memory management has improved significantly, but the trend continues toward higher RAM configurations as apps become more sophisticated and users expect smoother multitasking experiences.

Code size has grown substantially over the past few decades, but the growth pattern is more nuanced than purely exponential.

Different types of software have shown different growth patterns:

**Operating Systems**:

- The Linux kernel has grown from approximately 10,000 lines of code in 1991 to over 30 million lines by 2023

- Windows has similarly expanded from a few million lines in Windows 3.1 to estimates of 50-100 million lines in modern Windows versions

**Applications**:

- Web browsers have grown from hundreds of thousands of lines to tens of millions (Chrome is estimated to contain 25+ million lines)

- Office suites have expanded from 1-2 million lines to 10+ million lines

This growth reflects several factors:

1. **Feature expansion**: Software continuously adds new capabilities

2. **Abstraction layers**: Modern code builds on increasingly complex frameworks

3. **Hardware capabilities**: More powerful hardware enables larger, more complex software

4. **Platform support**: Supporting multiple platforms increases codebase size

5. **Security and reliability**: More code dedicated to error handling, security, and edge cases

The pattern resembles more of a super-linear growth curve rather than purely exponential. There have been periods of rapid expansion followed by consolidation or refactoring. Additionally, modern development practices like microservices and containerization have changed how we measure "program size" as functionality becomes more distributed.

Software modularity has also improved, allowing developers to manage larger codebases through better organization and encapsulation.

Further Reading

Another description of state explosion can be found in this article at statecharts.dev.

See Also

References: https://guitarvydas.github.io/2024/01/06/References.html

Blog: guitarvydas.github.io

Videos: https://www.youtube.com/@programmingsimplicity2980

Discord: https://discord.gg/65YZUh6Jpq

Leanpub: [WIP] https://leanpub.com/u/paul-tarvydas

Gumroad: tarvydas.gumroad.com

Twitter: @paul_tarvydas

Substack: paultarvydas.substack.com